The Strategic Crisis of Static Intelligence

Modern large language models remain constrained by a kind of anterograde amnesia. They can reason impressively inside a temporary context window, but they cannot reliably consolidate real-time experience into durable systemic memory. In civic, geopolitical, and institutional settings, that is a structural weakness rather than a cosmetic limitation.

The Zero System starts from a different premise: intelligence for the real world must operate across time scales. It must learn from fast-moving signals without losing sight of slow-moving forces. It must turn transient observation into long-horizon strategy instead of freezing the model the moment deployment begins.

One engine. Every human need. The core idea is simple: dissolve the boundary between training and inference so the system keeps adapting after launch.

The Nested Learning Paradigm

Nested Learning rejects the idea that model architecture and model optimization are separate concerns. Instead, it treats them as the same principle seen at different levels of abstraction. Layers are not just stacked features; they are solutions to nested optimization problems held in relation to one another.

The bridge between classic backpropagation and this broader paradigm is associative memory: a component that stores key-to-value associations and revises them according to internal surprise and salience, rather than serving as a static lookup table.
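To make "updating on surprise" concrete, here is a minimal sketch using the classic delta rule: the memory predicts a value for a key, measures the prediction error, and writes in proportion to that error. It illustrates the general idea only and is not the Zero System's implementation. The class name `AssociativeMemory`, the learning rate, and the toy key and value are all assumptions made for this example.

```python
class AssociativeMemory:
    """Linear key-to-value memory updated by a delta rule (illustrative only)."""

    def __init__(self, dim_key, dim_value, lr=0.5):
        self.lr = lr  # assumed learning rate, chosen for readability
        self.weights = [[0.0] * dim_key for _ in range(dim_value)]

    def recall(self, key):
        """Return the value the memory currently associates with `key`."""
        return [sum(w * k for w, k in zip(row, key)) for row in self.weights]

    def update(self, key, value):
        """Write (key, value) and return the surprise measured before the write."""
        predicted = self.recall(key)
        error = [v - p for v, p in zip(value, predicted)]
        # Delta rule: the write is proportional to the prediction error.
        for row, e in zip(self.weights, error):
            for j, k in enumerate(key):
                row[j] += self.lr * e * k
        return sum(e * e for e in error) ** 0.5


memory = AssociativeMemory(dim_key=2, dim_value=1)
key, value = [1.0, 0.0], [1.0]  # hypothetical pattern shown repeatedly
surprises = [memory.update(key, value) for _ in range(5)]
```

In this toy run the surprise shrinks each time the same pattern is seen, so a familiar input changes the memory less than a novel one.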

Three Structural Pillars

- Expressive optimizers: optimizers that go beyond simple dot-product update rules and act as associative memory modules, treating learning as compression and reorganization.

- Self-modifying modules: units that adapt their internal update behavior online rather than waiting for a global retraining pass.

- Continuum memory systems: memory modules that update at different rates so fast adaptation and long-term persistence can coexist.

| Feature | Traditional Deep Learning | Nested Learning |
|---|---|---|
| Architecture | Heterogeneous and static stacks | Uniform, multi-level optimization |
| Optimization | Separated from architecture | Fundamentally identical to architecture |
| Learning clock | Single centralized rate | Multi-frequency and multi-time-scale |
| Memory | Short-term and long-term handled separately | Continuum spectrum of updates |
| Adaptability | Mostly frozen after pre-training | Continual and self-modifying |
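The "multi-frequency" learning clock and the continuum of memory updates can be sketched as a set of memory levels that each update on their own period. This is a toy model under stated assumptions: the `MemoryLevel` class, the periods (1, 4, 16 steps), the learning rates, and the scalar signal are all hypothetical, picked only to show fast and slow levels behaving differently on the same input.

```python
class MemoryLevel:
    """One level of a multi-rate memory (hypothetical sketch)."""

    def __init__(self, name, period, lr):
        self.name = name
        self.period = period  # assumed: update once every `period` steps
        self.lr = lr          # assumed: how far each update moves the state
        self.state = 0.0
        self.buffer = []

    def observe(self, step, signal):
        """Buffer the signal; consolidate it when this level's clock ticks."""
        self.buffer.append(signal)
        if step % self.period == 0:
            mean = sum(self.buffer) / len(self.buffer)
            self.state += self.lr * (mean - self.state)
            self.buffer.clear()
            return True
        return False


levels = [
    MemoryLevel("fast", period=1, lr=0.9),
    MemoryLevel("medium", period=4, lr=0.5),
    MemoryLevel("slow", period=16, lr=0.1),
]

update_counts = {level.name: 0 for level in levels}
for step in range(1, 33):
    signal = 1.0 if step <= 16 else 0.0  # regime shift halfway through
    for level in levels:
        if level.observe(step, signal):
            update_counts[level.name] += 1
```

After the shift, the fast level has almost fully adopted the new regime, while the slow level has moved only slightly and still carries a trace of the old one. That is the coexistence of fast adaptation and long-term persistence the table describes, in miniature.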

The Zero System Architecture

The Zero System operationalizes Nested Learning through a tiered model that mirrors the multi-frequency rhythms of the brain. Each layer handles a different velocity of change so rapid responses do not destabilize deep strategic goals.

Tier 3 · Intervention Points

Weekly, high-frequency interactions capture human intuition and lateral thinking through game-based scenarios such as shipboard crises, negotiation events, and time-sensitive operational problems.

Tier 2 · Trigger Mechanisms

Monthly synthesis identifies recurring causal patterns beneath the surface narrative. Trust deficits, chokepoints, resource bottlenecks, and political feedback loops become reusable mechanisms instead of one-off stories.

Tier 1 · Root Drivers

Quarterly analysis transforms those mechanisms into civilization-scale recommendations, optimizing for a durable Nash equilibrium (an outcome no party can improve on by deviating alone) rather than short-term tactical wins.
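The flow from weekly sessions to monthly mechanisms to quarterly root drivers can be sketched as two aggregation passes. Everything specific here is an assumption for illustration: the function names, the four-sessions-per-month calendar, the recurrence thresholds, and the mechanism tags, which are hypothetical labels and not recorded data.

```python
from collections import Counter


def monthly_synthesis(weekly_sessions, min_weeks=2):
    """Tier 2 sketch: keep mechanisms tagged in at least `min_weeks` sessions."""
    counts = Counter(tag for session in weekly_sessions for tag in set(session))
    return {tag for tag, n in counts.items() if n >= min_weeks}


def quarterly_analysis(monthly_mechanisms, min_months=2):
    """Tier 1 sketch: keep mechanisms that recur in at least `min_months` months."""
    counts = Counter(tag for month in monthly_mechanisms for tag in month)
    return sorted(tag for tag, n in counts.items() if n >= min_months)


# Hypothetical tags from Tier 3 sessions: three months, four sessions each.
quarter = [
    [["trust_deficit", "chokepoint"], ["trust_deficit"],
     ["resource_bottleneck"], ["chokepoint"]],
    [["trust_deficit"], ["trust_deficit", "feedback_loop"],
     ["feedback_loop"], []],
    [["chokepoint"], ["resource_bottleneck"],
     ["chokepoint"], ["trust_deficit"]],
]

monthly = [monthly_synthesis(sessions) for sessions in quarter]
root_candidates = quarterly_analysis(monthly)
```

With these made-up inputs, only the tags that recur within a month and then across months survive to the quarterly pass. A real pipeline would need far richer evidence than tag counts.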

The Hope Architecture and the Ensemble

To prevent catastrophic forgetting, the system leans on the Hope architecture: a recurrent, self-modifying structure that uses self-referential optimization to manage its own memory retention. Memory becomes a live loop, not an archive.

Within that loop, a model ensemble handles different lenses on the same problem space.

- Claude: assumption auditing and philosophical vetting.

- GPT: adversarial stress testing and political viability.

- Perplexity: evidence grounding and historical validation.

- Replit: real-time MVP construction and tool execution.

The DUO Model

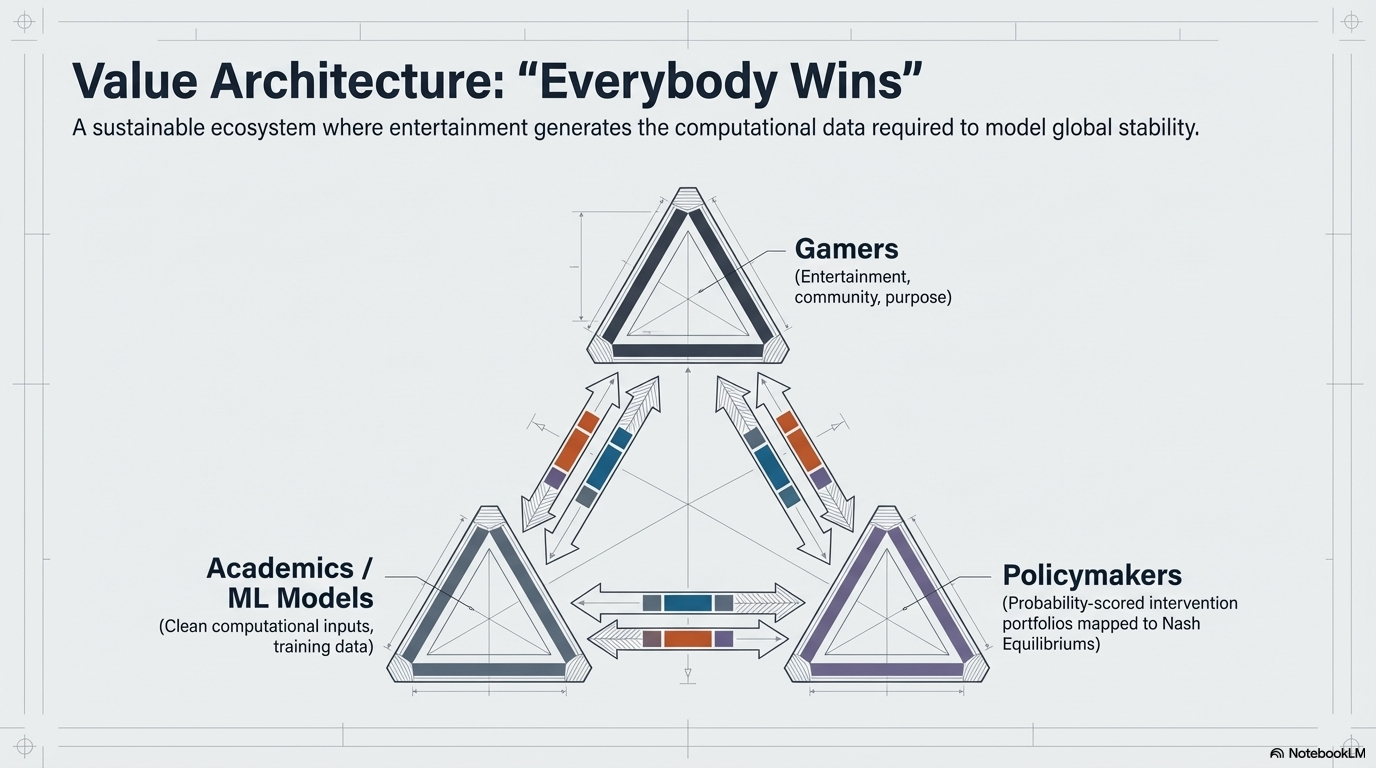

Purely algorithmic solutions usually break down in geopolitical settings because calculation is only part of the difficulty. The rest is incentive design, human nuance, ambiguity, and irrational stakeholder behavior. DUO answers that by treating intelligence as distributed across humans and machines rather than isolated inside either.

The system reduces cognitive overload through narrative abstraction. A water crisis can be reframed as a spaceship filtration breakdown. A trust collapse can be explored as a crew conflict over shared infrastructure. That translation unlocks inventive play without the paralysis of real-world baggage, then maps the useful strategies back into policy space.

Case Study: Freshwater Access and the GERD Dispute

Freshwater access is an ideal launch problem for the Zero System because it is well documented, high-stakes, and shaped by transboundary tensions that can be modeled as strategic equilibrium problems.
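To show what a strategic equilibrium problem looks like in code, here is a minimal sketch that enumerates the pure-strategy Nash equilibria of a small game. The two-player "share or hoard" game and its payoff numbers are entirely hypothetical. They follow the shape of a textbook stag hunt and are not estimates for any real party or dispute.

```python
from itertools import product


def pure_nash_equilibria(payoffs, actions):
    """Return action profiles where no player gains by deviating alone."""
    equilibria = []
    for profile in product(*actions):
        stable = True
        for player in range(len(actions)):
            current = payoffs[profile][player]
            for alternative in actions[player]:
                deviation = profile[:player] + (alternative,) + profile[player + 1:]
                if payoffs[deviation][player] > current:
                    stable = False
        if stable:
            equilibria.append(profile)
    return equilibria


# Hypothetical payoffs: (player 0, player 1) for each action profile.
actions = [("share", "hoard"), ("share", "hoard")]
payoffs = {
    ("share", "share"): (4, 4),
    ("share", "hoard"): (0, 3),
    ("hoard", "share"): (3, 0),
    ("hoard", "hoard"): (2, 2),
}

equilibria = pure_nash_equilibria(payoffs, actions)
```

This toy game has two equilibria: both share, or both hoard. Which one the players reach depends on whether each expects the other to cooperate, which is why trust-building mechanisms matter in this kind of model.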

In the proposed game frame, the Epyon's water recycling system is failing. Three factions control different parts of the solution: the Command, the Engineers, and the Inhabitants. Their incentives are misaligned, trust is exhausted, and survival depends on a shared technical system none can operate alone.

That abstraction maps back into the Grand Ethiopian Renaissance Dam dispute:

- The Command: Ethiopia and upstream sovereignty.

- The Inhabitants: Egypt and downstream dependence.

- The Engineers: Sudan as the technical intermediary.

Tier 3 captures human negotiation behavior inside the game. Tier 2 extracts the reusable trust-building mechanisms. Tier 1 validates those patterns against hydrological reality and turns them into policy-grade recommendations.

Beyond Pre-Training

The future of systemic problem solving requires neural learning modules that remain alive after deployment. A model that stops learning the moment it enters the field is already in decay.

Epyon HQ represents a collaboration surface for humans and machines to work on the biggest problems available: not only to analyze them, but to keep learning through them at every scale. That is the promise of the Zero System, and the reason Captain's Log starts here.